For many people, music elicits an emotional response that affects how they feel, think, and act. Exactly how the brain and body respond to music, however, remains an active area of research.

In a new study, a group of psychologists and computer scientists at the University of Southern California worked together to establish how and why the brain reacts to music.

The researchers recruited a group of participants and examined their heart rate, sweat gland activity, brain activity, and emotional responses to a few unfamiliar musical tracks.

The researchers found that Heschl’s gyrus and the superior temporal gyrus, areas of the brain located in the auditory cortex, were significantly influenced by the musical tracks. In addition, musical features such as rhythm, dynamics, register, and harmony were treated as predictors of the participants’ responses.

“Taking a holistic view of music perception, using all different kinds of musical predictors, gives us an unprecedented insight into how our bodies and brains respond to music,” said Tim Greer, co-author of the study.

The study also found that galvanic skin response increased at the introduction of a new instrument or the beginning of a musical crescendo. Tracks with greater complexity, or more instruments, were correlated with a stronger response, according to the findings.

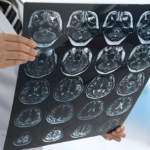

In a separate experiment, researchers used magnetic resonance imaging (MRI) to scan the brains of 40 participants while they listened through headphones to three emotionally evocative musical tracks with no lyrics.

To measure emotional intensity, the participants rated their experiences on a scale of 1 to 10. Researchers also measured physical reactions through heart activity and skin conductance. Using artificial intelligence, they fed the data into algorithms that identified the auditory features most consistently associated with the responses.

Shrikanth Narayanan, co-author of the study, concluded: “Novel multimodal computing approaches help not just illuminate human affective experiences to music at the brain and body level, but in connecting them to how actually individuals feel and articulate their experiences.”

“Using this research, we can design musical stimuli for therapy in depression and other mood disorders. It also helps us understand how emotions are processed in the brain.”

The findings were presented at the 27th ACM International Conference on Multimedia.